Mumbai: A new report by pi-labs has raised alarm over the rising misuse of artificial intelligence in the creation of deepfakes, revealing that 93% of explicit deepfake victims are women. The findings highlight how synthetic media, once considered a fringe technological risk, has evolved into a widespread digital threat with a distinctly gendered impact.

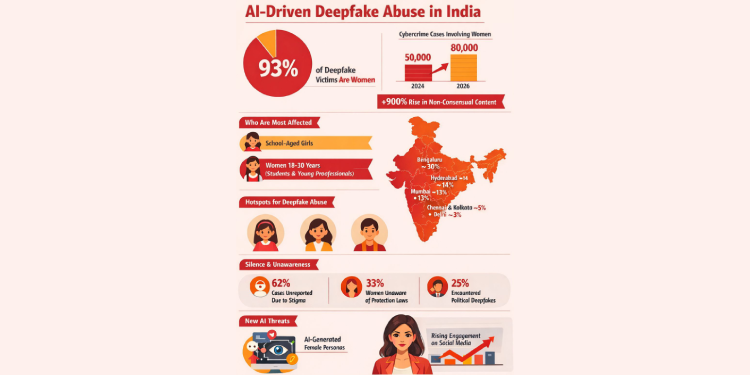

According to the report, deepfake content targeting women has increased by 900% in recent years, underscoring the growing scale of technology-driven online abuse. In India, the broader cybercrime landscape shows a similar escalation: cybercrime complaints involving women rose from around 50,000 in 2024 to nearly 80,000 by 2026, an increase of roughly 60% in two years.

The study notes that almost 98% of deepfake pornography depicts women. Much of it is produced with face-swapping applications and bot networks that disproportionately target women and girls, some of them of school age. Victims in India are often aged 18 to 30 and range from students to young professionals, with cities such as Bengaluru reporting a rising number of such incidents.

Despite the scale of the issue, reporting remains low. Globally, 62% of deepfake abuse cases involving women go unreported due to stigma, while research in India suggests that more than one-third of women facing online harassment take no action. Many victims reportedly reduce their online presence after experiencing abuse. The report also found that 33% of women are unaware of laws designed to protect them from online harassment.

City-level data further illustrates the spread of cybercrime across India’s urban centres. Bengaluru accounts for nearly 30% of complaints, followed by Hyderabad at around 14% and Mumbai at approximately 13%. Chennai and Kolkata contribute about 5% each, while Delhi represents close to 3% of reported cases.

Commenting on the findings, Ankush Tiwari, founder and CEO of pi-labs, said, “AI is one of the most powerful technologies of our time, but like every powerful tool, it reflects the intent of those who use it. We are witnessing a growing trust deficit in digital spaces, where identity can be manipulated within minutes and reputations can be damaged overnight. Our focus is not only on detecting synthetic media but also on restoring confidence and control. People deserve to feel safe in their own digital presence. Through responsible innovation, stronger verification systems, and greater awareness, we believe technology can shift from being a source of harm to becoming a shield that protects individuals online.”

The report highlights that image morphing and deepfake videos have emerged as the most common forms of AI misuse affecting women. Deepfake pornography remains one of the most persistent categories and among the hardest to contain, even after it has been identified as manipulated content.

It also points to a trend that has emerged over the past few years: fully AI-generated female personas that are not modelled on real individuals. These synthetic personalities are gaining significant traction on social media platforms, raising complex questions about digital credibility and trust. The report suggests that AI-generated personalities may soon achieve popularity comparable to that of real-world celebrities.

Containing deepfakes remains a major challenge due to the widespread availability of generative AI tools and the increasing number of rogue creators. Industry estimates indicate that more than 5,000 face-swap tools and over 1,000 voice-cloning applications are currently accessible online, enabling deepfake content to be created and circulated at scale.

To address the issue, pi-labs has developed pi-authentify, an AI-driven detection system that scans digital media for traces left by generative tools. By using AI against AI, the system flags suspected deepfakes and generates an authenticity score that can assist with takedown efforts or legal action. Another solution, NaMoKavach, allows users to submit suspicious media through a secure portal and receive a confidential verification assessment within two working days.

The report concludes that minimising digital footprints and adopting deepfake detection tools remain among the most practical preventive steps. As synthetic media continues to evolve, the findings underline the urgent need for coordinated action from individuals, technology companies and digital platforms to address what is increasingly becoming a critical online safety issue.